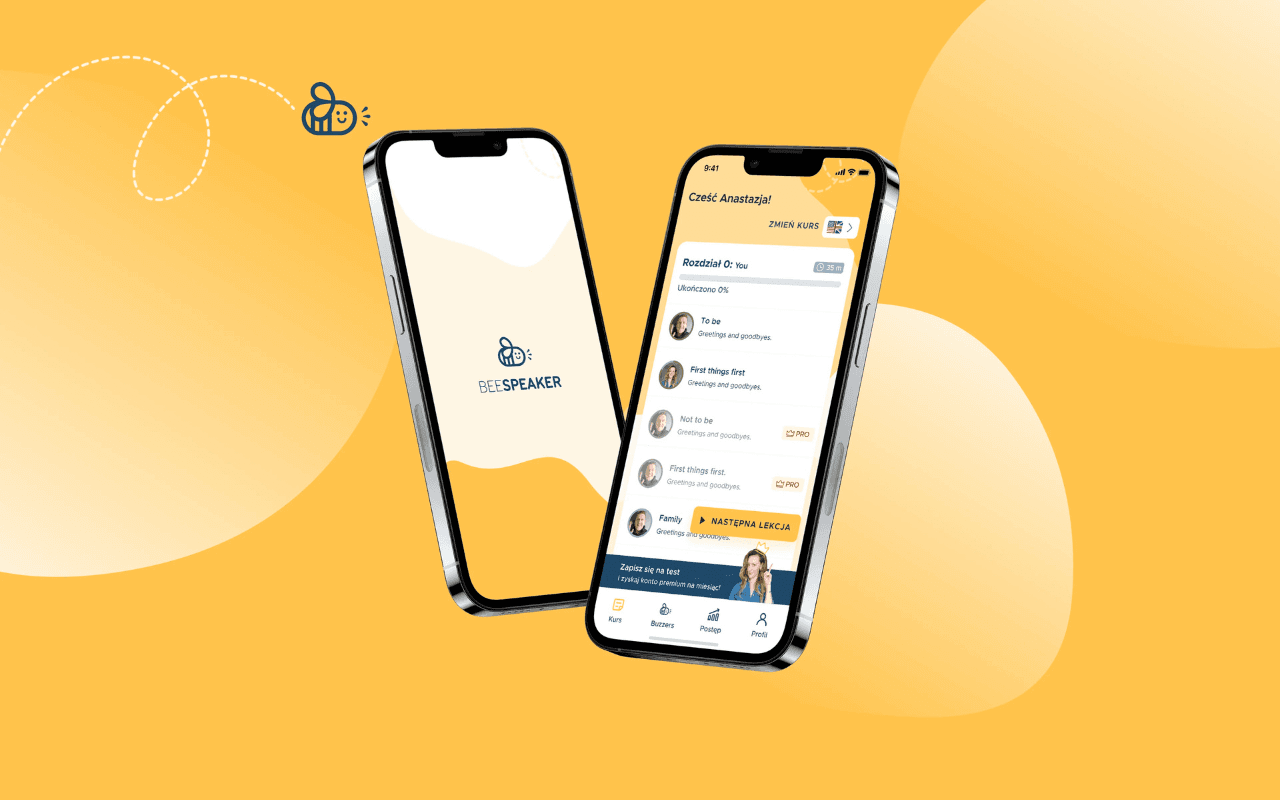

BeeSpeaker is an innovative EduTech startup transforming how people learn foreign languages by eliminating the biggest barrier: fear. We partnered with them to create a mobile app that combines speech recognition technology with engaging video content from native speakers. The app learns from each user's performance, adapting difficulty levels in real time while enabling voice-controlled navigation. The result is a language learning tool that has attracted 3 investors, achieved 4.7+ star ratings across platforms, and generates 1.3 million monthly user interactions.

What We Faced

BeeSpeaker identified that the biggest obstacle for language learners is fear — fear of speaking, fear of incorrect pronunciation, fear of judgment. Traditional language apps don't address this psychological barrier. The startup needed a partner who could not only build an app but deeply understand user psychology and craft a digital product that delivers genuine value. The challenge was creating an experience that feels safe enough for users to practice speaking while providing accurate pronunciation feedback, all while developing technology compelling enough to secure startup funding and investor confidence.

How We Solved It

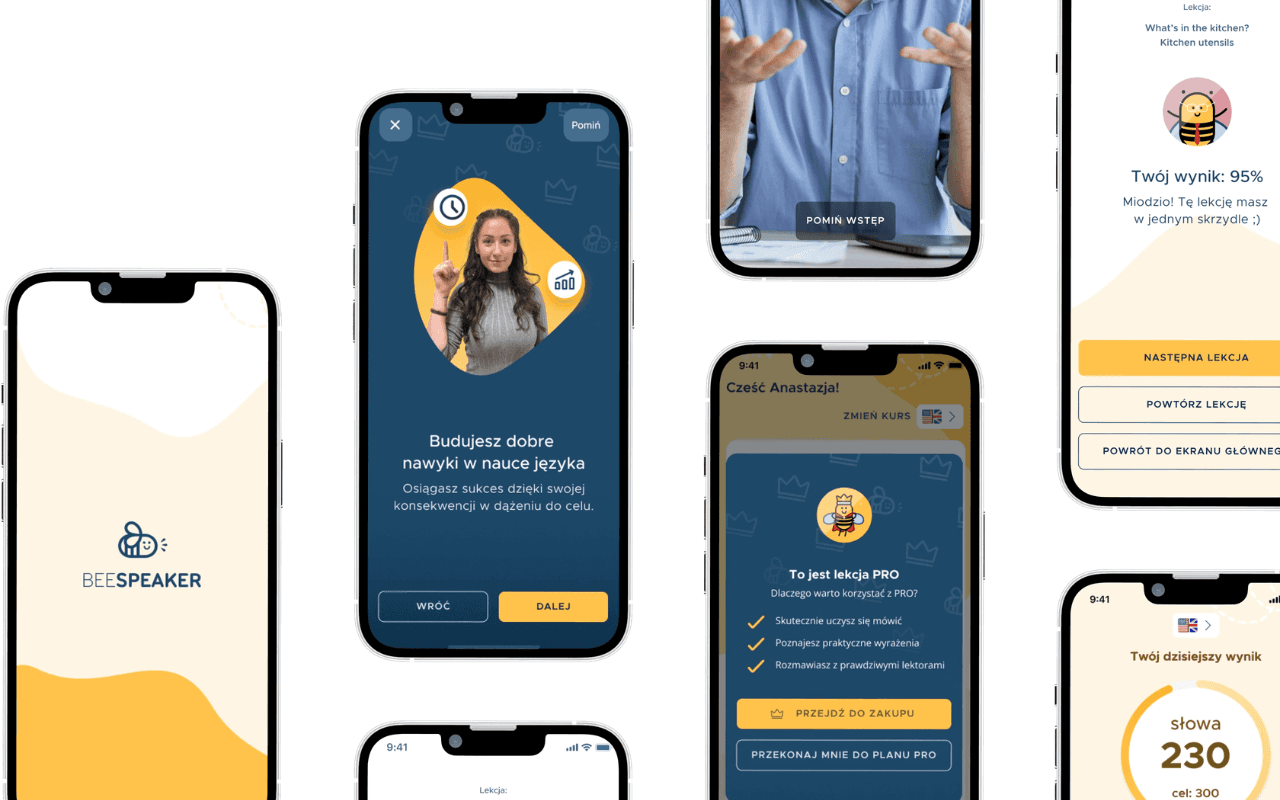

We built BeeSpeaker as a cross-platform Flutter application with a sophisticated AI-powered voice recognition engine at its core. The app teaches languages through interactive videos featuring native speakers, with a unique twist — the interface is controlled by spoken words, creating an immersive speaking environment from the moment users open the app. We implemented a self-learning speech recognition system that adapts to each user's pronunciation patterns and provides real-time feedback. An intelligent algorithm displays exercises at appropriate difficulty levels based on current user performance, ensuring continuous progression without frustration. Gamification elements keep learners engaged and motivated. The entire journey — from strategic planning through intense workshops to triple QA checks — ensured a polished product that exceeded both investor and user expectations.

What We Delivered

Cross-platform Flutter application with AI-powered speech recognition, adaptive learning algorithms, video content delivery, and gamification engine.

How We Got There

Discovery & User Research

Conducted meticulous analysis of consumer needs and expectations through user interviews and market research. Identified fear of speaking as the primary psychological barrier. Documented user journeys and pain points across existing language learning applications.

Strategic Planning & Workshops

Facilitated intense strategic workshops to align on product vision and technical approach. Developed the concept of voice-controlled interfaces as a solution to speaking anxiety. Created detailed product roadmap and feature prioritization.

Visual Identity & UX/UI Design

Designed a warm, encouraging visual identity that reduces anxiety and promotes confidence. Created intuitive interfaces that make voice control feel natural. Three UX/UI designers collaborated on crafting the human-app interaction that feels entirely new compared to other learning tools.

AI & Flutter Development

Built the cross-platform application using Flutter with three dedicated developers. Implemented self-learning speech recognition algorithms with an AI engineer. Developed the adaptive difficulty engine that personalizes content based on user performance. Integrated video delivery infrastructure for native speaker content.
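
To illustrate the idea behind the adaptive difficulty engine, here is a minimal sketch in Python. It is not BeeSpeaker's production code (the app itself is built with Flutter), and the class name, the 0-to-1 score scale, the window size, and the thresholds are all assumptions chosen for the example. It keeps a short window of recent pronunciation scores and moves the learner up or down one level when the average crosses a threshold.

```python
from collections import deque


class AdaptiveDifficulty:
    """Hypothetical sketch: pick the next exercise level from recent scores."""

    def __init__(self, levels=5, window=5, raise_at=0.8, lower_at=0.5):
        self.levels = levels        # number of difficulty levels (assumed)
        self.level = 1              # every learner starts at the easiest level
        self.raise_at = raise_at    # mean score needed to move up (assumed)
        self.lower_at = lower_at    # mean score that triggers a step down (assumed)
        self.scores = deque(maxlen=window)  # most recent scores, each in [0, 1]

    def record(self, score):
        """Store one pronunciation score and return the level to show next."""
        self.scores.append(score)
        # Only adjust once a full window of attempts has been collected.
        if len(self.scores) == self.scores.maxlen:
            mean = sum(self.scores) / len(self.scores)
            if mean >= self.raise_at and self.level < self.levels:
                self.level += 1
                self.scores.clear()  # judge the new level on fresh attempts
            elif mean <= self.lower_at and self.level > 1:
                self.level -= 1
                self.scores.clear()
        return self.level


engine = AdaptiveDifficulty(window=3)
for score in (0.9, 0.9, 0.9, 0.2, 0.2, 0.2):
    print(score, "->", engine.record(score))
```

Changing one level at a time and clearing the window after each change keeps the progression gradual, so a single bad attempt does not push a learner back down.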

Testing & Investment Preparation

Conducted triple QA checks to ensure reliable performance across devices. Prepared the application for investor demonstrations, helping BeeSpeaker secure 3 investors. Monitored the app after launch and iterated based on 1,000+ in-app reviews.

Visual Showcase

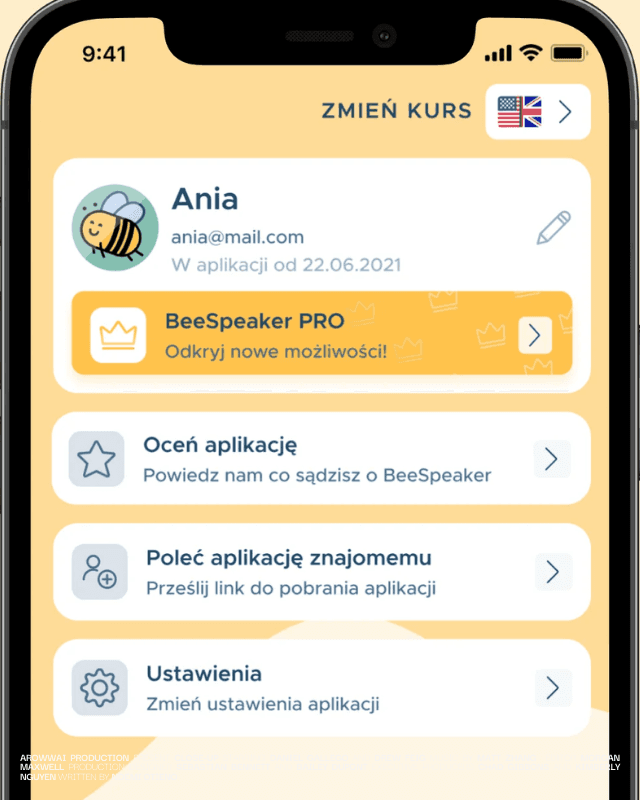

User Authentication: Sign-Up / Log-In Functionality

Multilingual Support to Grow Internationally

Multiple Features According to User Needs

“The Human-App Interaction is something entirely new in comparison to other language learning tools. The most challenging part was to make this new approach obvious and intuitive for the end users. We achieved that through careful design and expert collaboration.”